Spring 2026 Tech Pulse: From Chips to Cameras and the Devices Rewriting the Playbook

If you’ve been watching the tech landscape this spring, you can feel something shifting. We’re not just talking about incremental updates or spec bumps. The second quarter of 2026 is shaping up as one of those rare moments when hardware, silicon, and industry standards all seem to converge at once, fundamentally changing how we’ll interact with computing for years to come.

In a flurry of announcements and product launches that feels almost orchestrated, established giants and ambitious newcomers are staking fresh claims across artificial intelligence, augmented reality, and the smart home. For developers, engineers, and anyone building tech products, the message is becoming impossible to ignore: the future won’t be built in the cloud alone, but at the messy, exciting intersection of specialized chips, on-device intelligence, and widely supported standards.

The AI Hardware Conversation Gets Real

Nvidia’s CEO Jensen Huang framed much of this conversation during his GTC 2026 keynote, where partnerships spanned everything from autonomous vehicles and entertainment to advanced robotics. Huang’s presentation drove home a point that’s been building for a while: AI isn’t just software anymore. It’s become a full systems problem that demands tailored hardware and tight integration across the stack.

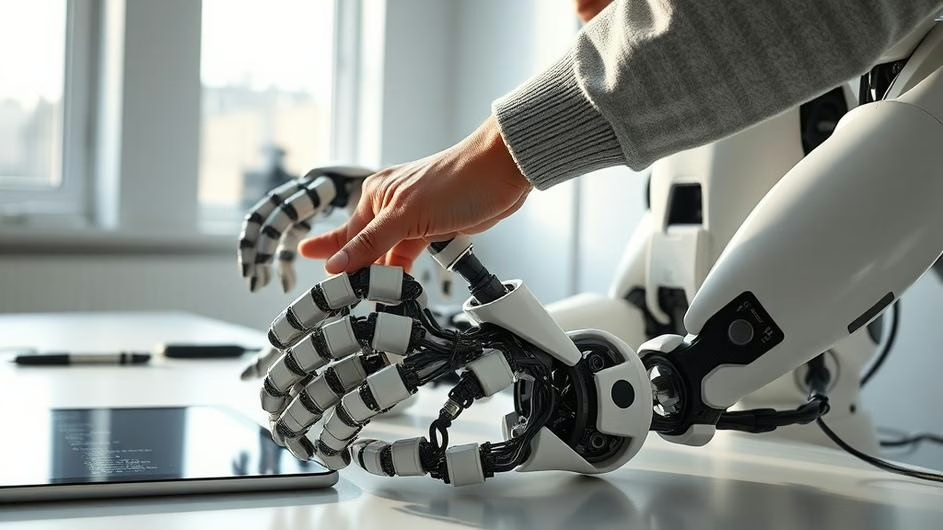

That message landed with particular force alongside a striking demo from Disney Imagineering, which used lifelike robotics to show how AI-driven animation, sensing, and actuation can come together to create entirely new experiences. The point wasn’t just faster processing, but what becomes possible when hardware and software are designed in tandem from the ground up.

This shift toward specialized AI hardware is accelerating faster than many predicted. One of the more interesting supply chain moves reported this spring has Samsung stepping into chip manufacturing for AI inference silicon, producing Groq 3 parts for Nvidia. These inference chips are built specifically to run trained machine learning models efficiently, focusing on executing predictions rather than the heavy lifting of training.

Why does this matter? Because specialized silicon for edge and datacenter inference lets companies push AI workloads closer to users, slashing latency and cost while opening up entirely new device form factors. It’s a trend that’s quietly reshaping everything from smartphones to smart factories.

Samsung’s Two-Front Strategy

Samsung is playing an interesting double game right now. While manufacturing chips for others, it’s also expanding its own device portfolio. The new Galaxy A37 and A57 are reportedly turning heads with aggressive pricing in markets like Thailand, illustrating a broader strategy of staking out midrange value as premium features migrate downmarket.

At the same time, Samsung continues to iterate on software and user experience, adding features to One UI and experimenting with hardware innovations like privacy-aware displays. These moves matter because they signal how quickly high-end functionality can propagate into mainstream devices, and how pricing pressures are shaping global adoption patterns. Could we be seeing the beginning of a new wave of affordable, AI-capable devices that bring advanced features to broader audiences?

Smart Homes Finally Get Smarter

Smart home interoperability took what might be its most significant step forward yet with the arrival of the first Matter-compatible security camera, the Aqara Camera Hub G350, now shipping after its CES unveiling. Matter is that industry standard we’ve been hearing about for years, designed to make smart home devices work reliably across different ecosystems by simplifying discovery, security, and control.

The G350 doubles as a hub, a sensible approach that collapses two functions into one device and reduces clutter while making Matter adoption practical for everyday consumers. For developers, this changes the integration game significantly, moving the focus from fragile, platform-specific APIs to a common foundation that should lower friction for cross-platform features. Matter is one of those standards that, if widely adopted, could finally make smart homes feel less like a collection of incompatible gadgets and more like a cohesive system.

AR Sheds Its Niche Label

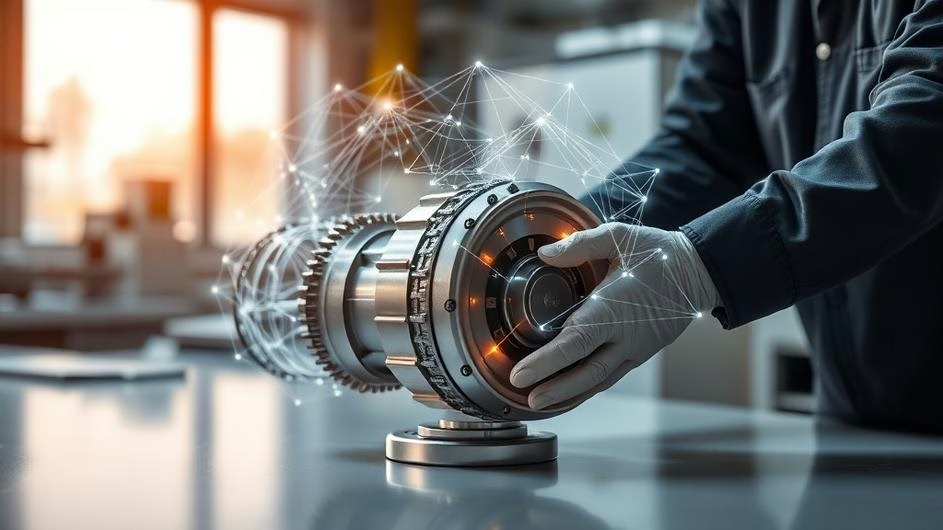

Meanwhile, augmented reality hardware is quietly shedding its niche label. This year has seen more consumer-focused entries than ever before, including Alibaba’s Qwen smart glasses and rumored collaborations that pair retail distribution with big tech design expertise. As we noted in our look at AR’s mainstream moment, the real shift is the emphasis on on-device AI.

This technology powers real-time translation, voice assistants, and contextual heads-up information without needing constant cloud round trips. On-device AI matters for both privacy and responsiveness, and it forces developers to fundamentally rethink app architecture, model size, and compute budgets so that experiences feel immediate and genuinely useful.

According to Glass Almanac’s roundup of 2026 AR devices, the top entries are surprising buyers with their practicality and affordability. We’re moving beyond novelty and into tools that solve real problems, whether that’s helping technicians with hands-free instructions or providing travelers with instant translation overlays.

The Hardware Economics Ripple Effect

Those device economics ripple into other categories in interesting ways. Apple’s refresh of its MacBook Pro lineup with new M5 Pro and M5 Max chips pushed the prior M5 model down to record low prices. This is a familiar market dynamic, sure, but it has practical implications for developers who buy hardware for local development or compute tasks.

Older silicon becomes cost-effective surprisingly quickly, and for many workflows, the previous generation offers excellent performance per dollar. At the same time, the push toward more powerful, specialized chips means teams must balance investment in bleeding-edge hardware against the productivity gains of faster builds, shorter training loops, and more efficient local testing. It’s a calculation that’s becoming more complex as the AI hardware wave reshapes what’s possible on-device.
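
The performance-per-dollar comparison above comes down to simple arithmetic. Here is a minimal sketch, assuming you have a benchmark score that reflects your own workload; the machine names, scores, and prices are placeholders for illustration, not real figures.

```python
def perf_per_dollar(benchmark_score, price_usd):
    """Return benchmark points per dollar spent."""
    if price_usd <= 0:
        raise ValueError("price_usd must be positive")
    return benchmark_score / price_usd


# Placeholder numbers for illustration only; not real benchmarks or prices.
machines = {
    "previous_gen": {"score": 100.0, "price": 1000.0},
    "current_gen": {"score": 130.0, "price": 2000.0},
}

# Rank machines from best to worst value.
ranked = sorted(
    machines,
    key=lambda name: perf_per_dollar(machines[name]["score"], machines[name]["price"]),
    reverse=True,
)
print(ranked)
```

A ratio like this ignores the time saved by faster builds and shorter training loops, so treat it as one input to the decision rather than the whole answer.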

What This All Means for Builders

So what do these spring developments add up to for engineers and product builders? A few themes stand out that could define the next couple of years in tech.

Compute is fragmenting into distinct tiers, from cloud GPUs to edge inference chips and on-device accelerators. Each tier brings its own trade-offs in latency, privacy, and cost, and choosing the right layer for different parts of your application will increasingly shape the user experience. As we explored in our analysis of the inference layer battle, where you run your AI models is becoming as important as which models you choose.
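
To make the tier trade-off concrete, here is a rough sketch of how an application might choose where to run a model. The tier names, latency figures, costs, and size limits are all assumptions made up for illustration, and `pick_tier` is a hypothetical helper, not part of any library.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Tier:
    name: str
    latency_ms: float       # assumed typical response latency
    cost_per_1k: float      # assumed cost per 1,000 requests
    max_model_mb: int       # largest model the tier is assumed to hold
    keeps_data_local: bool  # True if user data stays on the device


# Illustrative values only; measure your own stack before relying on them.
TIERS = (
    Tier("on_device", 20, 0.00, 500, True),
    Tier("edge", 60, 0.05, 8_000, False),
    Tier("cloud_gpu", 250, 0.40, 80_000, False),
)


def pick_tier(model_mb, max_latency_ms, needs_privacy, tiers=TIERS) -> Optional[Tier]:
    """Return the cheapest tier that satisfies every constraint, or None."""
    candidates = [
        t for t in tiers
        if t.max_model_mb >= model_mb
        and t.latency_ms <= max_latency_ms
        and (t.keeps_data_local or not needs_privacy)
    ]
    # Prefer lower cost, then lower latency; None if nothing qualifies.
    return min(candidates, key=lambda t: (t.cost_per_1k, t.latency_ms), default=None)
```

With these placeholder values, a small private model lands on the device, a mid-sized one goes to the edge, and only the largest falls back to cloud GPUs. A `None` result signals that the requirements conflict and something has to give, such as shrinking the model.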

Standards like Matter show that the industry can still rally around interoperability when it really matters. This reduces integration fragility and opens the door to richer ecosystems that benefit everyone, from developers to end users. It’s a reminder that sometimes the most impactful innovation isn’t a new feature, but better ways for existing technologies to work together.

AR and wearable devices are moving toward genuine mass adoption because they’re combining lower-cost hardware, smarter local AI, and clearer use cases like translation or contextual assistance. The devices are getting better, but just as importantly, people are starting to understand why they might want them.

Practical Next Steps for Developers

For developers looking to stay ahead of these shifts, the practical next steps are becoming clearer. Start optimizing your models for inference so they can run efficiently on dedicated chips and on-device accelerators. This isn’t just about performance; it’s about enabling new kinds of applications that work offline or with minimal latency.
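
One common way to shrink a model for inference is weight quantization. The sketch below shows symmetric 8-bit quantization of a list of weights in plain Python, purely to illustrate the idea; real toolchains do this per layer with far more care, and the function names here are hypothetical.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    # Avoid dividing by zero when the input is empty or all zeros.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the integer values."""
    return [q * scale for q in quantized]


weights = [0.5, -1.0, 0.25, 0.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
# Each restored weight differs from the original by at most about scale / 2.
print(q, restored)
```

Each weight now fits in one byte instead of four, at the cost of a small rounding error bounded by half the scale. Whether that error is acceptable depends on the model, so accuracy should be checked after quantizing.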

Design with interoperability in mind from the beginning. Adopt standards like Matter for smart home projects to reach more users with less bespoke plumbing. Your future self will thank you when you’re not maintaining a dozen different API integrations.

Embrace progressive enhancement so features can scale down gracefully for midrange devices and scale up for high-end silicon. Not every user needs or can afford the latest hardware, but everyone deserves a good experience.
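
As a sketch of what progressive enhancement can look like in code, the example below starts from a baseline feature set and adds capabilities only when the device can support them. The `DeviceProfile` fields, feature names, and thresholds are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DeviceProfile:
    ram_gb: float
    has_npu: bool  # assumed flag for a dedicated on-device AI accelerator


def select_features(device):
    """Start from a baseline and layer on features the device can handle."""
    features = ["basic_ui"]  # every device gets the baseline experience
    if device.ram_gb >= 6:
        features.append("on_device_translation")
    if device.has_npu and device.ram_gb >= 8:
        features.append("live_scene_understanding")
    return features


midrange = DeviceProfile(ram_gb=6, has_npu=False)
flagship = DeviceProfile(ram_gb=12, has_npu=True)
print(select_features(midrange))
print(select_features(flagship))
```

The key design choice is that the baseline never depends on the optional features, so a midrange phone still gets a complete experience while high-end silicon unlocks more.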

And maybe most practically, watch those pricing cycles. Opportunistic hardware upgrades can accelerate development at lower cost, giving your team access to better tools without blowing the budget. As new interfaces emerge, having the right hardware to test and develop on becomes increasingly important.

Looking Ahead

The spring lineup of 2026 isn’t just another set of product launches. It feels like a preview of an emerging architecture where intelligence is distributed, hardware is specialized by design, and standards work to reduce friction rather than create it.

That architecture will yield new classes of applications we’re only beginning to imagine, from private, low-latency AR assistants to unified smart home systems that simply work. If you build software, now’s the time to start planning for that distribution, thinking about where your computation happens and how your applications adapt to different hardware capabilities.

The experimental demos of today are becoming the everyday tools of tomorrow faster than many realize. The question isn’t whether this shift will happen, but whether you’ll be ready to build for it when it does.

Sources

- Samsung allegedly launches Galaxy A37 and A57; pricing is turning heads, Sammy Fans, Mon, 16 Mar 2026

- Highlights From Nvidia’s GTC 2026 Keynote With Jensen Huang, CNET, Mon, 16 Mar 2026

- The First Ever Matter Smart Security Camera Is Here, Forbes, Thu, 19 Mar 2026

- Top 7 AR Devices And Moves In 2026 That Surprise Buyers – Here’s Why, Glass Almanac, Mon, 16 Mar 2026

- M5 MacBook Pro With 14.2″ Liquid Retina XDR Display Drops to Record Low After Apple Launches M5 Pro and Max Models, Kotaku, Tue, 17 Mar 2026