AI at Scale: From Humanoid Factories to Encrypted Clouds and Grid-Friendly Data Centers

The last few months have felt like a laboratory that got fast-tracked into production. Humanoid robot companies are moving from building one-off prototypes to planning full assembly lines. Enterprise teams are scrambling to put AI to work. And a whole cluster of supporting technologies is quietly rearranging how we compute, how we secure data, and how we keep the lights on.

These threads look distinct on the surface. But they share a common logic: scaling intelligence means rethinking hardware, software, and infrastructure as one system, not three separate problems.

The Humanoid Production Problem

Take the rush to manufacture humanoid robots at scale. Startups that used to focus on perfecting a single unit are now talking about ramping to thousands of units. That changes the engineering problem. Engineers who once optimized one-off machines suddenly have to worry about yield rates, component sourcing, and managing software updates across an entire deployed fleet.

It exposes something uncomfortable: an AI stack can look elegant in the lab and turn fragile the moment it hits a factory floor. Production demands cross-disciplinary problem solving, from tactile sensors that can survive thousands of grasps to efficient inference chips that don’t melt the budget. The shift from prototype to production is where the real engineering begins.

Companies like Figure and 1X are already ramping up humanoid robot production, and the industry is watching closely to see which approaches scale.

Hands That Feel, Data That Learns

Robotic hands offer a great example of how hardware and data combine to unlock real capability. New efforts to give robot hands a sense of touch go way beyond better sensors. Researchers are building large, embodied datasets that let machine learning models understand contact, force, and subtle manipulation. Think about what it takes for a robot to pick up an egg without crushing it, or to handle a tool it has never seen before.

When you pair that kind of data with more reliable manufacturing, robots can start moving beyond scripted motions. They begin adapting to messy, real-world tasks. That has implications far beyond the factory. It is the kind of progress that could reshape everything from warehouse logistics to home assistance.

Security at the Foundation

While robotics pushes forward on the hardware front, enterprise teams are looking for shortcuts to adopt AI faster. Many turn to co-innovation deals with cloud and system providers. But speed comes with costs, both literal and operational.

Companies are waking up to a basic truth: patching security holes after the fact is expensive and unstable. You can’t bolt safety onto a system after it is deployed and expect it to hold. Durable defenses start with memory-safe code, a programming approach that prevents entire classes of bugs like buffer overflows before they ever appear. Investing in safer software up front reduces risk as AI systems enter critical business infrastructure and, eventually, physical infrastructure.

This matters for developers building on blockchain rails too. As crypto infrastructure converges with AI workloads, the same principles of secure coding apply. Smart contracts and AI inference pipelines both need to be built on solid, memory-safe foundations.

The Cryptographic Frontier

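Security also has a new frontier in cryptography. Encrypted cloud platforms now promise to keep data protected even while it is being computed on. Two distinct techniques sit behind that promise. Confidential computing processes data inside hardware-isolated enclaves, so the cloud operator cannot read it. Fully homomorphic encryption goes further and performs the computation on the ciphertext itself, so raw inputs are never decrypted on the server.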

That capability could transform regulated industries like healthcare and finance. It could ease a traditional tradeoff between utility and privacy. For developers, it means reconsidering where sensitive workloads run and how models get trained with external partners. Could this be the breakthrough that lets DeFi protocols tap into AI without exposing user positions? The possibilities are significant.
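To make "computing on encrypted data" concrete, here is a minimal sketch using a toy version of the Paillier cryptosystem. Paillier is only additively homomorphic, so it is a simpler relative of fully homomorphic encryption rather than the real thing, and it is not necessarily what any particular cloud platform uses. The tiny primes and the function names (`encrypt`, `decrypt`, `add_encrypted`) are assumptions chosen for readability and provide no real security.

```python
import math
import secrets

# Toy Paillier parameters. These small primes are an illustrative assumption
# and are far too small for real use.
P, Q = 1009, 1013
N = P * Q                # public modulus
N_SQ = N * N
G = N + 1                # standard simple choice of generator
LAM = (P - 1) * (Q - 1) // math.gcd(P - 1, Q - 1)  # lcm(p-1, q-1), private
MU = pow(LAM, -1, N)     # modular inverse of LAM mod N (Python 3.8+), private


def encrypt(m: int) -> int:
    """Encrypt an integer 0 <= m < N using only the public values N and G."""
    while True:
        r = secrets.randbelow(N - 1) + 1   # random blinding factor in [1, N-1]
        if math.gcd(r, N) == 1:
            break
    return (pow(G, m, N_SQ) * pow(r, N, N_SQ)) % N_SQ


def decrypt(c: int) -> int:
    """Recover the plaintext using the private values LAM and MU."""
    x = pow(c, LAM, N_SQ)
    return ((x - 1) // N * MU) % N


def add_encrypted(c1: int, c2: int) -> int:
    """Multiplying ciphertexts yields an encryption of the sum of the plaintexts."""
    return (c1 * c2) % N_SQ


# The "server" adds two values it cannot read; only the key holder sees 165.
total = add_encrypted(encrypt(120), encrypt(45))
print(decrypt(total))  # 165
```

The party that calls `add_encrypted` never needs the private values, which is the property that matters for regulated workloads. Production systems would rely on vetted libraries and much larger keys rather than hand-rolled code like this.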

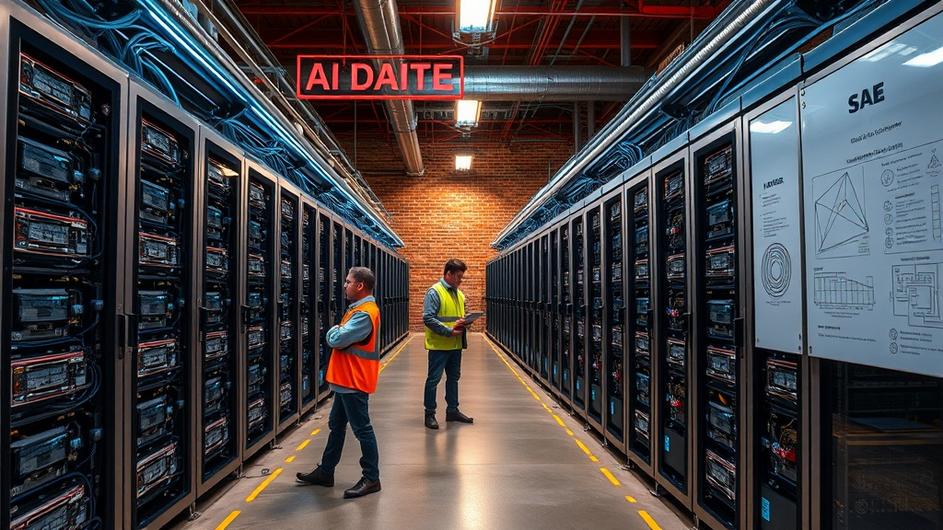

The Grid Problem

As AI models grow, so do their power demands. Data centers hosting AI workloads create wild swings in electricity use. That complicates grid connections and permitting in a big way. One proposed fix is a power buffer, essentially an energy storage and control system that smooths load spikes and helps data centers integrate with the grid faster.
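The idea of a power buffer can be sketched as a small simulation. This is a simplified model under stated assumptions, not a description of any vendor's system: the function name `buffer_load`, the half-full starting charge, and the load numbers are all hypothetical, and the model ignores conversion losses and battery power limits.

```python
def buffer_load(load_kw, grid_cap_kw, capacity_kwh, step_hours=1.0):
    """Return the grid draw per time step when a battery absorbs load swings.

    load_kw      : data center demand at each step, in kW
    grid_cap_kw  : the steady draw we want the grid to see, in kW
    capacity_kwh : usable battery capacity, in kWh
    """
    soc_kwh = capacity_kwh / 2  # assumption: battery starts half full
    grid_kw = []
    for load in load_kw:
        if load > grid_cap_kw:
            # Spike: discharge to cover the excess, limited by stored energy.
            discharge_kw = min(load - grid_cap_kw, soc_kwh / step_hours)
            soc_kwh -= discharge_kw * step_hours
            grid_kw.append(load - discharge_kw)
        else:
            # Lull: recharge with spare headroom, limited by free capacity.
            charge_kw = min(grid_cap_kw - load,
                            (capacity_kwh - soc_kwh) / step_hours)
            soc_kwh += charge_kw * step_hours
            grid_kw.append(load + charge_kw)
    return grid_kw


# Hypothetical load that swings between 40 kW and 100 kW every hour.
raw = [40, 100, 40, 100]
print(buffer_load(raw, grid_cap_kw=70, capacity_kwh=60))  # [70.0, 70.0, 70.0, 70.0]
```

In this toy case the grid sees a flat 70 kW instead of a 60 kW swing. A smaller battery would let part of each spike through, which is the sizing question a real design has to answer.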

This sounds like an operational detail, and it is. But it has strategic implications. The pace of AI growth will hit a wall if the infrastructure cannot keep up. We already see data center buildouts reshaping energy markets and creating new bottlenecks.

Smarter Hardware, Leaner Math

Hardware innovations promise to ease that constraint from the other side. Sparse computing exploits the fact that many neural networks contain a lot of redundancy: large numbers of weights and activations are zero or close to it, so hardware can skip those operations instead of performing the full dense math. That reduces memory and energy needs, often with little loss in accuracy.
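A small sketch shows where the savings come from. It stores a weight matrix in compressed sparse row (CSR) form, a common sparse layout, and multiplies it by a vector while touching only the nonzero entries. The helper names `to_csr` and `csr_matvec` and the example matrix are assumptions for illustration. Real sparse accelerators do this in hardware, not in Python loops.

```python
def to_csr(dense):
    """Convert a dense matrix (list of rows) to CSR: values, column ids, row pointers."""
    values, cols, row_ptr = [], [], [0]
    for row in dense:
        for j, v in enumerate(row):
            if v != 0:
                values.append(v)
                cols.append(j)
        row_ptr.append(len(values))  # marks where the next row starts
    return values, cols, row_ptr


def csr_matvec(values, cols, row_ptr, x):
    """Multiply a CSR matrix by vector x, doing one multiply per stored nonzero."""
    y = []
    for i in range(len(row_ptr) - 1):
        acc = 0
        for k in range(row_ptr[i], row_ptr[i + 1]):
            acc += values[k] * x[cols[k]]
        y.append(acc)
    return y


# Hypothetical 3x4 weight matrix in which 9 of the 12 entries are zero.
weights = [
    [0, 2, 0, 0],
    [0, 0, 0, 3],
    [1, 0, 0, 0],
]
x = [1, 2, 3, 4]

values, cols, row_ptr = to_csr(weights)
print(csr_matvec(values, cols, row_ptr, x))  # [4, 12, 1]
print(len(values), "multiplies instead of", len(weights) * len(x))  # 3 multiplies instead of 12
```

The result matches the dense product, but the sparse version stores and multiplies 3 values rather than 12. How much accuracy survives depends on how the zeros were introduced in the first place, for example through pruning.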

Meanwhile, better models of electron behavior, sometimes requiring quantum-level math, are guiding the next generation of power transmission and semiconductor design. These are not academic footnotes. They are the engineering foundation that will make energy-efficient AI economical at scale.

Beyond Earth and Into Biology

AI is expanding beyond Earth too. Advances now let image processing run directly on satellites, reducing latency and bandwidth requirements while enabling real-time decisions in orbit. Whether the application is environmental monitoring or communications, processing at the edge of space is a reminder that compute location matters more than ever.

And then there is biology. Tools aimed at turning DNA into engineerable code are maturing fast. When life gets treated like software, the demand for rigorous design, validation, and safety practices parallels what we see in robotics and cloud AI. The convergence points to an emerging ethic for builders: complex systems require anticipatory thinking, robust software practices, and hardware that respects the limits of physics and power.

What This Means for Builders

Taken together, these trends point to something like a durable design principle. Scaling intelligence will not be won by algorithms alone. It will be won by integrating safer software, energy-aware hardware, privacy-preserving infrastructure, and manufacturing processes that make reliability cheap.

For developers, traders, and technical leaders, that means investing in fundamentals right now. Memory-safe languages. Power buffering. Encrypted computation. The convergence of power, privacy, and edge AI is not a distant trend. It is the reality engineers are building for today.

We are still early in a decade where labs become factories, satellites become compute nodes, and biological systems become programmable. The decisions engineers make today about safety, energy, and interoperability will shape whether that future feels chaotic, or whether it delivers the practical benefits we hope to see.

Sources

- Video Friday: Figure, 1X Ramp Up Humanoid Robot Production – IEEE Spectrum

- When Agents Meet Robots: How 2026 Is Redrawing the Map for AI and Automation – TechDailyUpdate

- From Mining Rigs to Model Halls: How Crypto Infrastructure Is Powering the AI Era – TechDailyUpdate

- From Data Centers to Vibe Coding: How 2025’s AI Surge Is Rewriting Software and Infrastructure – TechDailyUpdate

- Edge AI’s Next Leap: How Powerful Collaborations and Infrastructure Are Redefining On-Device Intelligence – TechDailyUpdate

- Power, Privacy, and the Edge: What Claude, Mythos, and Voxtral TTS Mean for the Next Wave of AI – TechDailyUpdate

- From Chip Floors to Factory Lines: How CES 2026 and OpenAI’s Manufacturing Move Signal a New Era for Consumer AI – TechDailyUpdate

- AI at the Edge: Powering Tomorrow’s Cities, Markets, and Innovations – TechDailyUpdate