From Weird Phones to Wearable Reality, MWC 2026 Shows Where Mobile Tech Is Headed

Walking the halls of Mobile World Congress 2026, you couldn’t help but feel something had shifted. This wasn’t just another trade show packed with incremental camera upgrades. It felt more like a crossroads, a moment where the entire device landscape was quietly rearranging itself. Phones, glasses, headsets, and laptops are all blurring together, and this year the message was clear: integration is everything, the hardware is finally maturing, and we’re hitting a software moment that turns yesterday’s prototypes into today’s products.

That software moment has a name: Android XR. The platform rollout that kicked off in late 2025 is now the catalyst for concrete plans in spatial computing. For anyone who’s been tracking the mobile and AR space, this is a big deal. XR, or extended reality, is the umbrella term covering everything from virtual reality to augmented reality and every mix in between. Think of it as the toolkit that lets apps place information, characters, or entire interfaces into the world around you, instead of trapping them on a flat screen. With a stable platform finally in place, manufacturers and developers have a shared baseline. That predictability is crucial for hardware makers trying to justify a retail launch and for app teams that need reliable APIs to build on.

You could see that new predictability playing out in a couple of distinct ways on the show floor. Established phone makers weren’t abandoning the handset. Far from it: they were leaning into incremental but genuinely consequential improvements, proving the phone is still the central hub of our digital lives. Xiaomi made waves with its 17 Ultra reveal, a flagship that doubles down on camera partnerships and deep system-level features. It’s a reminder that the photographic arms race and sensor fusion are still where flagship phones push boundaries.

Then there’s the other side of the coin. Companies like Nothing are chasing a completely different ethos with devices like the Nothing Phone 4A Pro. It’s all about distinctive design and a lighter, more playful take on the user interface. As CNET’s analysis of weird phone futures suggests, even when phones feel experimental or strange, that experimentation often feeds back into the broader platform ecosystem. It’s how new ideas get tested.

The second major trend was a growing appetite for hybrid devices: machines that borrow the always-on connectivity of a phone, the spatial awareness of a headset, and the raw compute power of a laptop. Apple’s play here is interesting. The new, colorful, and budget-friendly MacBook Neo represents the other side of the compute equation: lighter, cheaper, and far more power-efficient. Why does a laptop matter to the spatial computing story? Because this is where the heavy lifting happens, from training lightweight AI models at the edge to creating the content for those mixed reality experiences. A thinner, more affordable MacBook shows how vendors are now thinking about distributing compute power across our entire device mix, rather than trying to cram it all into a single powerhouse.

These two forces, the evolving phone and the distributed compute model, converge perfectly when you look at the emerging wearable and spatial products. The announcements and previews at MWC suggested that 2026 won’t be a battle of one single format, but a test of many form factors. Lightweight consumer glasses are making a serious comeback, driven by a simple reality: people want something they can actually wear all day without feeling weighed down or looking like a cyborg.

Snap, for instance, is pushing a new generation of specs aimed squarely at everyday use, not just niche early adopters. These aren’t full-blown XR headsets; they’re more like pocketable displays that layer small, useful bits of information onto your view. For a huge number of users, this kind of device will be the sensible first step into spatial computing. As highlighted in the Glass Almanac’s analysis of 2026’s AR shifts, the surprise winners are often the products that prioritize wearability and specific utility over raw immersion.

At the more immersive end of the spectrum are headsets promising richer spatial presence. But perhaps more intriguing are the new services emerging alongside this hardware. Hologram avatars, AI personalities that inhabit your physical space through AR, have moved from sci-fi concept to realistic product category. To be clear, these aren’t magic beams of light. They’re AI-driven agents rendered in your environment through your glasses or phone camera, capable of guiding you through a task, teaching you a skill, or just keeping you company. For developers, as we’ve explored in pieces on when AI learns to build and AR learns to scale, this represents a completely new UI paradigm, one that blends conversational AI, real-time 3D rendering, and contextual environmental sensing.

Why This Actually Matters

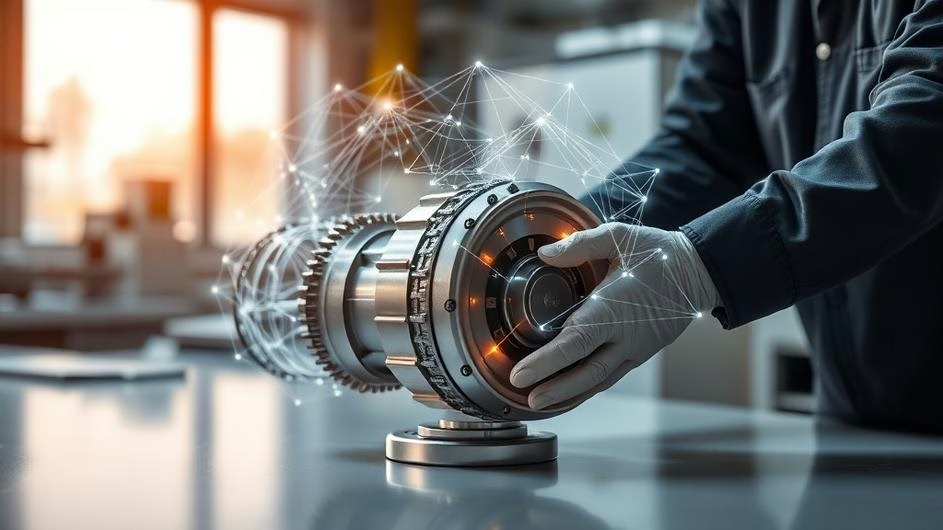

So, who should care about all this, and why? For enterprise users and businesses, the promise is straightforward: productivity gains. Imagine guided maintenance workflows where instructions and diagrams hover right over the machinery, or hands-free information overlays for warehouse pickers. The ROI here can be measured in reduced errors and faster task completion.

For consumers, the first truly useful experiences will likely be social, entertainment-focused, or centered around context-aware assistants that actually justify wearing glasses for more than just fashion. Think about getting real-time translation subtitles during a conversation, or navigation arrows painted onto the sidewalk in front of you. The early enterprise purchases will help fund the incremental hardware improvements we need, while mainstream consumer adoption will likely start with these lightweight, socially acceptable devices.

But let’s temper the excitement with some practical reality. The hardware is, in many cases, arriving faster than the software use cases that can sustain long-term engagement. The platform momentum from Android XR and related mobile innovation helps enormously, but ultimate success hinges on compelling apps, decent battery life, comfortable ergonomics, and developer tools that actually scale. This is where cross-device thinking becomes critical. Your phone remains your primary camera and communication device. Your laptop is your creation hub. Your glasses or headset become the place where ambient computing lives, quietly in the background. The companies that figure out how to map workflows seamlessly across these devices, rather than just pitching another single-purpose gadget, are the ones who’ll find real product-market fit.

MWC 2026 also revealed a subtler trend that deserves more attention: design and personality are making a comeback. Xiaomi’s partnership with Leica for a special Leitzphone camera edition, and Nothing’s unwavering aesthetic-first approach, show that consumers still care deeply about how technology feels in their hand, not just what it does on a spec sheet. The MacBook Neo’s colorful palette and approachable pricing make serious compute power feel less intimidating and more inviting. These are important signals for developers building the next wave of apps. If the hardware feels friendly and approachable, users will be more willing to experiment with new interaction models, giving developers the freedom to build richer, more experimental experiences.

What Comes Next?

Looking ahead, the immediate battle won’t be glasses versus headsets versus phones. It’ll be between the ecosystems that can integrate all these things smoothly. Expect a flood of hybrid products and services that deliberately blur device boundaries. The winners in this space will be those who deliver coherent cross-device experiences, set sensible privacy defaults for the always-on sensors these devices require, and build developer platforms that drastically reduce the friction of building spatial apps.

In short, MWC 2026 wasn’t about finding the one killer device. It was about watching the rules of engagement change right in front of us. Phones aren’t disappearing, but their role is evolving from solitary command centers to intelligent nodes in a larger, more connected hardware network. Lightweight AR glasses will gradually bake spatial features into daily life. Headsets will handle the deeper, more immersive experiences. And laptops will continue to provide the necessary horsepower for creation and complex tooling.

For developers, investors, and tech leaders watching the 2026 hardware and AR landscape, the path forward is becoming clearer, even if the detailed map isn’t fully drawn yet. The strategy is to invest in cross-device thinking, prioritize user privacy and physical comfort (ergonomics matter more than ever), and understand that the first practical XR wins will come from integrated software experiences that make life simpler, not more complicated.

We’re at an inflection point where improved design, platform stability, and smarter compute distribution are aligning to make spatial computing genuinely practical. The question isn’t whether our future will be augmented; it’s how quickly and smoothly the ecosystem can stitch itself together. The next 24 months will determine which of these new formats fade into the background as invisible utilities and which remain novelty gadgets. For anyone building what comes next, that’s an incredibly exciting, if challenging, place to be.

Sources

- 7 AR Products And Shifts Revealing 2026’s Surprise Winners – What Changes, Glass Almanac, Mon, 02 Mar 2026

- Xiaomi’s 17 Ultra Mobile World Congress 2026 Reveal, CNET, Mon, 02 Mar 2026

- The Future of Phones Is Weird, CNET, Sat, 07 Mar 2026

- Apple Gets It Right! Hands-on with MacBook Neo, CNET, Fri, 06 Mar 2026

- Nothing Phone 4A Pro First Look, CNET, Fri, 06 Mar 2026