Huawei’s UCM Software Accelerates AI Inference and Reduces Hardware Reliance

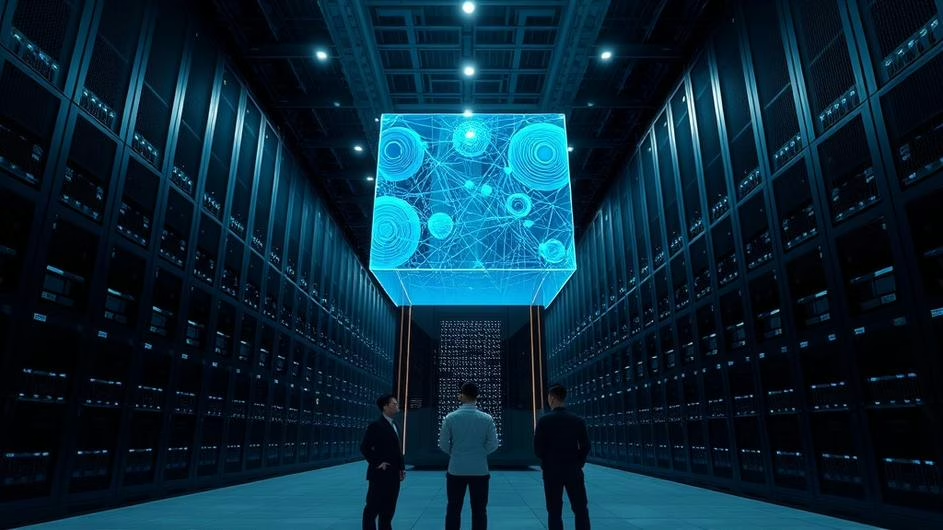

Picture this: massive data centers humming with thousands of servers, each one burning through electricity like there’s no tomorrow, all in the name of AI progress. It’s been the standard playbook for years. Want better AI performance? Add more chips. Need faster inference? Stack more hardware. But what if I told you there’s a company quietly flipping this script on its head?

Huawei just dropped something that’s got the tech world buzzing. Their Unified Cache Manager (UCM) isn’t another piece of silicon at all. It’s software that rethinks how AI inference uses the memory it already has. And honestly? The implications are pretty mind-blowing.

The Hardware Hunger Games

Let’s be real about the current state of AI infrastructure. It’s like feeding a digital monster that never gets full. Every breakthrough in AI capabilities seems to demand exponentially more computing power, more specialized chips, and frankly, more everything. Data centers are already bursting at the seams, and the electricity bills? Don’t even get me started.

The traditional approach has been throwing hardware at the problem. Need to run multiple AI models? Deploy separate servers for each one. Want faster processing? Add more accelerators. It’s a strategy that works, sure, but it’s also incredibly wasteful. We’re talking about servers sitting idle for hours, processing units that are underutilized, and cooling systems working overtime to keep everything from melting down.

Enter the Game Changer

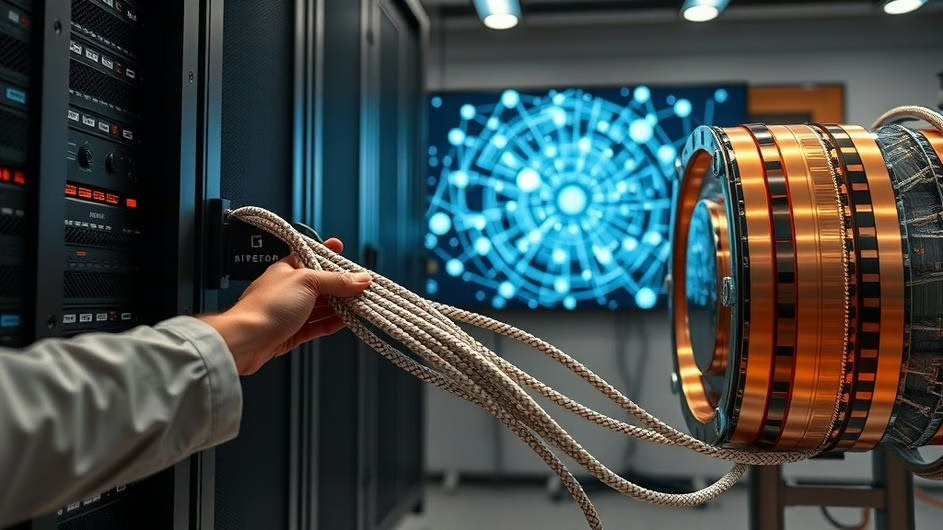

This is where Huawei’s UCM gets interesting, and I mean really interesting. Instead of playing the “more hardware” game, they’ve engineered something fundamentally different. According to Tom’s Hardware, Huawei claims UCM delivers up to 22x higher throughput and up to 90% lower latency than traditional cache hierarchies in AI workloads.

Here’s what makes the UCM special: it’s built around Huawei’s Ascend AI processors, but the real magic happens in how everything’s orchestrated. Think of it as having a brilliant conductor managing an entire symphony orchestra, ensuring every instrument plays at exactly the right moment. No wasted notes, no idle musicians.

The UCM creates a unified pool of computing resources that can be dynamically allocated based on actual needs. Running a computer vision model that needs heavy matrix calculations? The system automatically assigns the right cores. Switching to natural language processing? It seamlessly redistributes resources without missing a beat.
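To make the pooling idea concrete, here’s a minimal Python sketch of a shared pool that hands out cores on demand. Everything in it (`ResourcePool`, the core counts, the workload names) is hypothetical and only illustrates the general idea of dynamic allocation. It is not Huawei’s API or scheduler.

```python
from dataclasses import dataclass, field


@dataclass
class ResourcePool:
    """Hypothetical shared pool of identical compute cores."""
    total_cores: int
    allocations: dict[str, int] = field(default_factory=dict)

    @property
    def free_cores(self) -> int:
        # Cores not currently reserved by any workload.
        return self.total_cores - sum(self.allocations.values())

    def allocate(self, workload: str, cores: int) -> bool:
        """Reserve cores for a workload; refuse if the pool cannot cover it."""
        if cores <= 0 or cores > self.free_cores:
            return False
        self.allocations[workload] = self.allocations.get(workload, 0) + cores
        return True

    def release(self, workload: str) -> int:
        """Return all of a workload's cores to the pool."""
        return self.allocations.pop(workload, 0)


pool = ResourcePool(total_cores=32)
vision_ok = pool.allocate("vision", 24)   # heavy matrix work takes most of the pool
nlp_first = pool.allocate("nlp", 16)      # refused: only 8 cores are free
pool.release("vision")                    # vision job ends, cores go back
nlp_second = pool.allocate("nlp", 16)     # now there is room
```

The point of the sketch is that nothing is permanently tied to one model: cores go back to the pool the moment a job finishes, so the next workload can pick them up instead of waiting on dedicated hardware.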

Breaking Down the Technical Wizardry

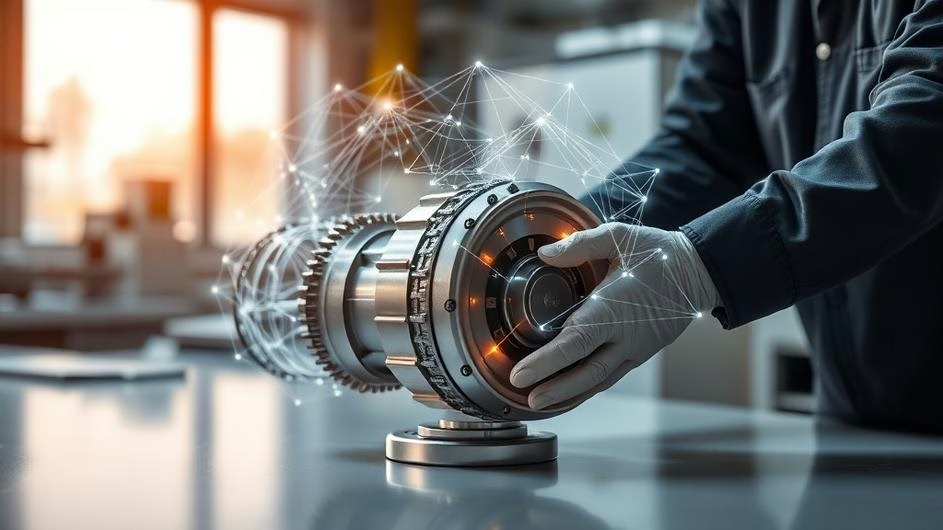

What really gets me excited about this technology is the software-hardware synergy. The UCM doesn’t just rely on better chips – it’s the entire ecosystem working in harmony. The MindSpore AI framework and Ascend-specific libraries create this tightly integrated environment where every operation is optimized.

As reported by South China Morning Post, Huawei’s algorithm could significantly reduce reliance on foreign memory chips, which has massive implications beyond just efficiency gains.
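For a feel of how software can stretch a small amount of fast memory, here’s a toy tiered cache in Python. It assumes a generic design in which a small fast tier spills its least-recently-used entries into larger, slower tiers and promotes them back on access. The tier names (`hbm`, `dram`, `ssd`), the capacities, and the `TieredCache` class are illustrative assumptions, not a description of how UCM is actually implemented.

```python
from collections import OrderedDict


class TieredCache:
    """Toy tiered cache: full tiers demote their least-recently-used entry."""

    def __init__(self, capacities: dict[str, int]):
        # Tiers are ordered fastest to slowest, e.g. {"hbm": 2, "dram": 2, "ssd": 4}.
        self.capacities = capacities
        self.order = list(capacities)
        self.tiers = {name: OrderedDict() for name in capacities}

    def _insert(self, key, value, level: int) -> None:
        if level >= len(self.order):
            return  # fell off the slowest tier: the entry is dropped
        name = self.order[level]
        tier = self.tiers[name]
        tier[key] = value
        tier.move_to_end(key)
        if len(tier) > self.capacities[name]:
            # Demote the least-recently-used entry to the next slower tier.
            old_key, old_value = tier.popitem(last=False)
            self._insert(old_key, old_value, level + 1)

    def put(self, key, value) -> None:
        """Store a value in the fastest tier, removing any stale copy first."""
        for tier in self.tiers.values():
            tier.pop(key, None)
        self._insert(key, value, 0)

    def get(self, key):
        """Look up a key; a hit in any tier promotes it to the fastest tier."""
        for name in self.order:
            tier = self.tiers[name]
            if key in tier:
                value = tier.pop(key)
                self._insert(key, value, 0)
                return value
        return None

    def where(self, key):
        """Report which tier currently holds the key, or None."""
        for name in self.order:
            if key in self.tiers[name]:
                return name
        return None


cache = TieredCache({"hbm": 2, "dram": 2, "ssd": 4})
for i in range(5):
    cache.put(f"kv{i}", i)

before = cache.where("kv0")   # oldest entry has been pushed down to the slowest tier
value = cache.get("kv0")      # reading it pulls it back into the fastest tier
after = cache.where("kv0")
```

The trade-off is the usual one: hot data stays in the scarce, expensive memory, while colder data lives somewhere cheaper and pays a latency penalty only when it’s needed again.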

The matrix-compute units in the Ascend processors (the cube units of Huawei’s Da Vinci architecture) are specifically designed for the matrix multiplication operations that form the backbone of neural networks. Couple that with high-bandwidth memory interfaces and sophisticated interconnects, and you’ve got a system that minimizes those pesky data transfer bottlenecks that usually kill performance.

But here’s the kicker: Huawei’s also integrating model compression and quantization techniques directly into their software stack. This means AI models can run at reduced numerical precision with little loss of accuracy, essentially squeezing more performance out of the same hardware.
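If quantization sounds abstract, here’s a small Python sketch of one common textbook variant: symmetric 8-bit quantization of a list of weights. It’s an illustration of the general technique under simple assumptions (one scale for the whole tensor, made-up weights), not the scheme Huawei’s stack uses.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor quantization: map floats onto integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]


weights = [0.823, -1.27, 0.051, 0.4, -0.337]   # made-up example values
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# Worst-case rounding error is about half of one quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
```

Each weight now fits in one byte instead of four, and the restored values differ from the originals by at most about half a quantization step. Whether that small error matters depends on the model, which is why accuracy is usually re-checked after quantizing.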

The Real-World Impact

Now, let’s talk about what this actually means for businesses and developers. The cost savings alone are staggering. We’re looking at dramatically reduced capital expenditure because you simply don’t need as many physical servers. But the operational savings? That’s where things get really interesting.

Lower power consumption translates directly to reduced electricity bills and cooling costs. For companies already struggling with cybersecurity and infrastructure challenges, this kind of efficiency gain is a game-changer.

The space optimization benefits are huge too, especially for edge computing scenarios where every square inch matters. Imagine deploying powerful AI capabilities in environments where traditional server racks simply won’t fit. IoT and network optimization applications suddenly become much more feasible.

Democratizing AI Access

Here’s something that really excites me: this technology could genuinely democratize AI access. When the barrier to entry drops – both in terms of upfront costs and operational complexity – suddenly smaller companies can play in the same league as tech giants.

AI marketing automation and efficiency tools that were once only accessible to enterprises with massive budgets could become standard for mid-sized businesses. Startups working on innovative AI solutions won’t need venture capital just to afford the infrastructure.

Huawei has announced this AI inference innovation as part of their broader strategy to challenge traditional computing paradigms, and frankly, it’s about time someone did.

The Environmental Angle

Let’s not forget the environmental impact here. Data centers already consume an estimated 1 to 1.5% of global electricity, and that number’s only growing. Huawei’s algorithm that reduces chip dependency isn’t just about geopolitics. It’s about sustainability too.

When you can achieve the same AI performance with significantly less hardware, you’re directly reducing the carbon footprint of AI operations. That’s not just good business; it’s responsible technology development.

Looking Forward

What’s particularly intriguing is how this fits into the broader landscape of AI agents and DevOps engineering. As AI systems become more sophisticated and autonomous, the efficiency gains from technologies like UCM become even more critical.

Industry analysts suggest this memory software could relieve pressure on China’s chip industry, but the implications extend far beyond any single country’s tech sector.

The shift toward leaner, more efficient AI infrastructure isn’t just a nice-to-have anymore – it’s becoming essential for sustainable growth in the AI sector. Companies that can deliver more intelligence per watt, more insights per dollar, and more innovation per square foot of data center space will have a significant competitive advantage.

Huawei’s UCM represents more than just a technical achievement. It’s a glimpse into a future where AI power doesn’t have to come at the cost of environmental sustainability or economic accessibility. And honestly? That future can’t come soon enough.