Apple, AR, and the AI Hardware Wave: How 2026 Is Rewiring the Device Landscape

If you’ve been tracking tech releases lately, you may have noticed that Apple’s spring product cadence is colliding with a wider wave of hardware innovation. Two ideas are taking shape: cheaper access to capable devices, and smarter, more autonomous hardware. In just a few weeks, we’ve watched flagship refinements from Cupertino, bold price experiments, a fresh crop of consumer AR glasses, and a serious push to pull AI out of the cloud and run it directly on local devices.

What does this mean? It signals a shift from speculative concepts to pragmatic, roll-up-your-sleeves product launches. For developers watching this space, it’s time to pay attention. The way platforms, sensors, and on-device intelligence are evolving will fundamentally change what apps can do, and who they can reach.

Apple’s Two-Track Playbook: Refinement and Reach

Let’s start with the elephant in the room, or rather, the fruit on the desk. Apple continues to iterate at a pace that’s hard to ignore. The new iPad Air with the M4 chip landed with what many are calling unusual decisiveness. Reviewers praised it as Apple’s best overall tablet, full stop. The company stuck with a proven display formula while seriously upgrading the silicon underneath. That pattern tells a story. It prioritizes real-world performance gains over flashy, headline-grabbing design changes.

But that’s only one strand of Apple’s 2026 strategy. The other is experimentation at the market’s lower end. Enter the MacBook Neo, reportedly priced at an aggressive $599. This isn’t just another spec bump. It shows Apple is willing to test new price points and even novel manufacturing techniques, like the 3D-printed aluminum mentioned in prototype reports. These moves aren’t happening in a vacuum. They form a coherent, two-track playbook: refine the premium experience for power users while dramatically expanding reach for both developers and everyday consumers. It’s a classic case of prices telling the story.

And if the leaks are right, Apple isn’t done with spring. Rumors point to a new macOS desktop for professionals, a low-cost tablet, and a streaming device. The industry whisper network also hints at a smart home display that blends HomePod audio with iPad-like visuals. The timing of that last device seems tied to Apple’s long-awaited, AI-enabled version of Siri, which the company has been carefully refining before a broad rollout.

The upshot for developers? A future where Siri has more contextual smarts, sitting at the center of a family of connected screens and speakers. Apps that seamlessly integrate voice, audio, and continuity across this device ecosystem will find entirely new opportunities.

The AR Market Wakes Up (For Real This Time)

Step outside of Cupertino, and you’ll find other hardware makers moving just as fast. The AR market is stirring in ways that finally feel tangible for consumers, not just for R&D labs. A whole crop of 2026 AR glasses is surfacing, ranging from lighter, social-first designs to stereo displays built for gaming and media. Some products are all about wearability and simple phone pairing. Others are trying to recreate cinema-scale immersion on a head-worn display.

Technical discussions around display optics are entering public conversation, alongside interface ideas such as Apple’s Liquid Glass design language. This matters because optics ultimately determine image quality and comfort. For developers, this shift is critical. AR is no longer an exotic API experiment or a niche developer toy. It’s becoming a mainstream interaction surface. That means new UI patterns, efficient 3D asset pipelines, and privacy-aware sensor handling aren’t just nice-to-haves. They’re becoming core competencies. This is part of the broader mobile wave that’s rewriting devices, cameras, and AR.

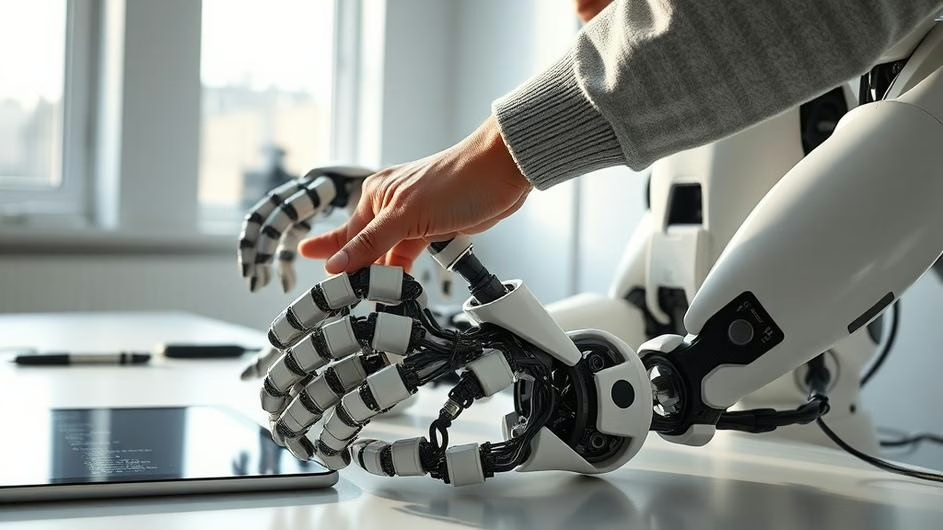

AI Leaves the Cloud, Moves Into Your Pocket (and Your Robot)

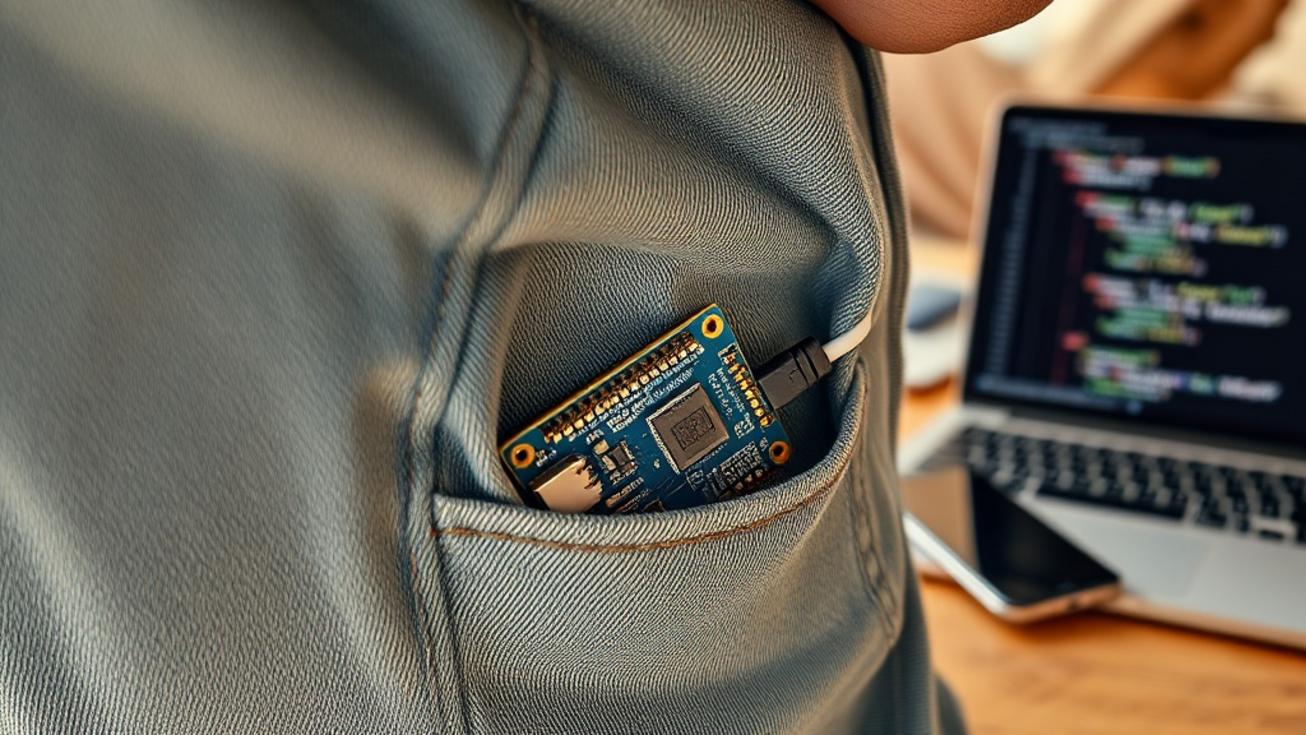

Running parallel to the race for the best visual wearable is a quieter, but equally important, push to decentralize AI. Take Qualcomm’s new Arduino Ventuno Q single-board computer as an example. This little device fuses microcontroller simplicity with legit on-device AI and robotics capabilities. It lets hobbyists and product teams run inference locally, without that constant, latency-inducing back-and-forth with the cloud.

Single-board computers are those compact, affordable circuit boards that can control motors, sensors, and interfaces. When they pack dedicated AI silicon, they open the door for responsive, privacy-conscious robotics and edge applications. For developers building smart cameras, interactive installations, or any project where connectivity might be flaky, this means prototyping cycles get shorter and deployments become more robust. It’s a concrete example of how AI is moving from cloud models to the physical world.
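To make the local-first idea concrete, here is a minimal Python sketch of one way an edge app might prefer on-device inference and treat the cloud as an optional extra. Everything in it is a hypothetical stand-in for illustration: `classify`, `Prediction`, the `local_model` and `cloud_model` callables, and the `min_confidence` threshold are assumptions, not part of any board's SDK.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Tuple

# Hypothetical model signature: takes raw frame bytes, returns (label, confidence).
Model = Callable[[bytes], Tuple[str, float]]


@dataclass
class Prediction:
    label: str
    confidence: float
    source: str  # "local" or "cloud"


def classify(
    frame: bytes,
    local_model: Model,
    cloud_model: Optional[Model] = None,
    min_confidence: float = 0.6,
) -> Prediction:
    """Run on-device inference first; consult the cloud only when it helps."""
    label, confidence = local_model(frame)
    local = Prediction(label, confidence, "local")

    # A confident local answer, or no cloud model at all, means we stay on-device.
    if confidence >= min_confidence or cloud_model is None:
        return local

    try:
        cloud_label, cloud_confidence = cloud_model(frame)
    except (ConnectionError, TimeoutError):
        # Flaky connectivity: fall back to the local answer instead of failing.
        return local
    return Prediction(cloud_label, cloud_confidence, "cloud")
```

Leaving `cloud_model` unset keeps every frame on the device, which is the privacy-conscious default. Passing one in trades some privacy and latency for a second opinion on low-confidence frames.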

Software Giants Bake AI Deeper Into Everything

Unsurprisingly, the software giants are following the same logic, embedding AI deeper into the fabric of productivity and operations. Microsoft talks about ‘hiring’ AI agents, automated assistants that can complete multi-step tasks across different services. Google is placing its Gemini model more prominently into Docs, Sheets, and Slides, making context-aware drafting and analysis part of the everyday work grind.

For small business tech, the implications are immediate. Vendors are tailoring AI-powered ERP and fintech tools to specific verticals like construction or retail. Even platforms like X are preparing new financial services, as noted in recent small business tech news. These services will reshape what users expect from every app, creating new integration points and challenges for developers, from secure credential flows to complex agent orchestration. It’s all part of the movement of AI out of the cloud and into real-world workflows.

A Quick Reality Check on Hardware Quality

Not every product launch sails smoothly into the sunset, of course. Dell’s refreshed XPS 14 serves as a recent reminder. Even mature, well-regarded laptop lines can hit unexpected quality snags, with a small batch of units reportedly experiencing typing issues. The lesson here is practical for everyone. Hardware can ship with edge cases that matter deeply to both users and developers. A device that subtly interferes with input or sensor accuracy can break core assumptions in otherwise solid software. In a market moving this fast, attention to rigorous QA and cross-platform testing remains a serious competitive edge.

What This Means for Builders and Businesses

So, where does this leave developers, product leaders, and tech-focused businesses? The next 12 months look to be about making pragmatic, forward-looking choices.

It’s time to invest in modular UI systems that can scale gracefully from phone screens to AR overlays. Design your APIs to tolerate intermittent input and sensor variance, because the edge isn’t always perfectly connected. Start planning for agent-driven workflows that might handle tasks currently managed by human operators. And perhaps most importantly, treat privacy and latency as first-class design constraints, not afterthoughts. On-device intelligence wins when it can act quickly and keep data private.
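As a rough illustration of tolerating intermittent input and sensor variance, the sketch below smooths readings with a rolling median and holds the last good estimate through short dropouts. The `TolerantSensor` class and its `window` and `max_missed` parameters are hypothetical names chosen for this example, and a median filter is only one of several reasonable smoothing choices.

```python
from collections import deque
from statistics import median
from typing import Deque, Optional


class TolerantSensor:
    """Smooths noisy readings and rides out brief gaps in the input."""

    def __init__(self, window: int = 5, max_missed: int = 3) -> None:
        self._recent: Deque[float] = deque(maxlen=window)
        self._max_missed = max_missed
        self._missed = 0

    def update(self, reading: Optional[float]) -> Optional[float]:
        """Feed one reading (None means a dropout) and get the current estimate."""
        if reading is None:
            self._missed += 1
            # After too many consecutive gaps the old data is stale, so drop it.
            if self._missed > self._max_missed:
                self._recent.clear()
        else:
            self._missed = 0
            self._recent.append(reading)

        # The median ignores a single spike far better than a plain average.
        return median(self._recent) if self._recent else None
```

With `window=3` and `max_missed=2`, feeding `10.0`, `11.0`, then a spike of `90.0` yields an estimate of `11.0`. Two dropouts after that still return `11.0`, and a third returns `None` so the caller knows the data is stale rather than silently trusting an old value.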

Looking beyond the constant churn of new model leaks and launch events, the deeper change is structural. Devices are converging into a cohesive ecosystem of displays, sensors, and local AI. That convergence is rewriting user expectations around responsiveness, personalization, and trust. Developers who build for this hybrid reality, who prioritize resilient integrations across both cloud and edge, will be the ones best positioned to turn these hardware waves into lasting, successful products. This is the essence of the 2026 device moment we’re entering.

The question isn’t really if these trends will affect your work. It’s how quickly you’ll adapt to them.

Sources

External References:

- The Morning After: The new iPad Air M4 is Apple’s best overall tablet, Engadget, Tue, 10 Mar 2026

- 7 AR Glasses In 2026 That Reveal Price, Leaks, And One Surprising Feature, Glass Almanac, Mon, 09 Mar 2026

- Apple’s not done: 3 more products coming this spring, Cult of Mac, Sun, 08 Mar 2026

- Small Business Technology News: Musk: X Money Launching In April, Forbes, Sat, 14 Mar 2026

- Apple ‘Ultra’ Products Expansion Is Up Next After MacBook Neo Launch, Bloomberg, Sun, 08 Mar 2026

Further Reading on Tech Daily Update:

- 2026 Hardware and AR: Where Phones Hold the Key and Prices Tell the Story

- How 2026’s Mobile Wave Is Rewriting Devices, Cameras, and AR

- 2026 Device Moment: Apple’s Product Blitz, the AR Glasses Surge, and What It Means for Developers

- Agents, Glasses, and Sensors: How AI Is Moving From Cloud Models to the Physical World

- From Robots to Renegotiated Software: How AI Is Moving Out of the Cloud and Into the Real World